Terry Hancock (2005-7/26)

After reviewing several different options, and balancing our constraints on the engine against what's readily available, I've selected the following game engine components to be used for The Light Princess:

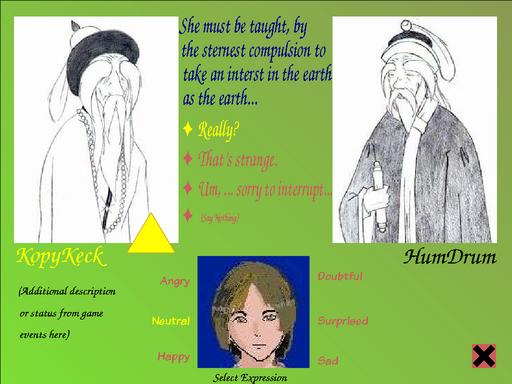

Some games have a specialized ``battle'' mode. In The Light Princess, however, all your battles are verbal, so it's appropriate that we have a specialized conversation or ``Talk'' mode. In this mode, you enter a full-screen interface where you converse with one to six non-player characters (we expect conversations with more than two characters to be very rare, but there will be a few larger ones). Needless to say, you will only see one-on-one conversations in the D1D.

Characters can address you or each other. Play is turn-based: if you choose ``say nothing'', another character may opt to speak. It's important to pay attention to your expression avatar; you can change your expression at any time (this does not use up a turn until you speak). To speak, you select your line from the available options, and it is delivered with the emotional content of your current expression. Characters are programmed to respond to emotional expression as well as to the line chosen, so different deliveries of the same line may provoke different reactions. Note that a simple line like ``Really?'' in the example above can carry a wide range of meanings depending on expression. The names of the expressions you can use are generated by the engine each turn, and the options may change as the game progresses. Your character has a limited ability to falsify feeling, so when he is feeling particularly strong emotions, he will not have the full range of expressions available.
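As an illustration of how the engine might narrow the expression options each turn, here is a minimal Python sketch. It assumes, purely for illustration, that expressions are points in a small emotion space (the `EXPRESSIONS` table and its register names are hypothetical) and that the character can only put on expressions within some distance of what he really feels, a distance that shrinks as his strongest emotion grows.

```python
import math

# Hypothetical expression table: each named expression is a point in a
# small emotion space (joy, anger, fear), with values in [0, 1].
EXPRESSIONS = {
    "neutral": {"joy": 0.0, "anger": 0.0, "fear": 0.0},
    "cheerful": {"joy": 0.8, "anger": 0.0, "fear": 0.0},
    "annoyed": {"joy": 0.0, "anger": 0.6, "fear": 0.0},
    "furious": {"joy": 0.0, "anger": 1.0, "fear": 0.0},
    "nervous": {"joy": 0.0, "anger": 0.0, "fear": 0.6},
}


def distance(a, b):
    """Euclidean distance between two emotion states."""
    keys = set(a) | set(b)
    return math.sqrt(sum((a.get(k, 0.0) - b.get(k, 0.0)) ** 2 for k in keys))


def available_expressions(state, min_reach=0.5, max_reach=1.5):
    """Names of the expressions the character can put on this turn.

    The character can only fake expressions within `reach` of what he
    really feels, and the reach shrinks as his strongest emotion grows.
    """
    intensity = max(state.values(), default=0.0)
    reach = min_reach + (max_reach - min_reach) * (1.0 - intensity)
    return sorted(name for name, point in EXPRESSIONS.items()
                  if distance(state, point) <= reach)
```

With these made-up numbers, a calm character is offered every expression, while a furious one (`anger` at 1.0) can only manage `annoyed` or `furious`.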

In The Light Princess you will almost always be playing the Prince (there are a few exceptions), so the player avatar will generally be the Prince. It doesn't really matter, though, because AutoManga will generate player and non-player avatars in the same way.

When there is more than one other conversant, you will default to addressing the last person who spoke (indicated by the large arrowhead). You can, however, change this by clicking on the avatar image of the person you wish to address. There should probably be another arrow to indicate who is being addressed by the current speaker. Because we are using AI agents, the characters may very well converse with each other -- they will use bits and pieces of pre-scripted dialog intended for the character they address, combined with generic responses.
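The default-addressee rule needs very little state. This is a hypothetical sketch (the `Conversation` class and its methods are not part of any existing engine code), and it assumes that a click only overrides the default until the next character speaks.

```python
class Conversation:
    """Tracks whom the player is addressing in Talk mode (hypothetical)."""

    def __init__(self, conversants):
        if not 1 <= len(conversants) <= 6:
            raise ValueError("Talk mode supports one to six conversants")
        self.conversants = list(conversants)
        self.last_speaker = self.conversants[0]
        self._override = None  # set when the player clicks an avatar

    def character_spoke(self, name):
        """Record an NPC line; the default addressee follows the last speaker."""
        self.last_speaker = name
        self._override = None  # assumption: a new speaker resets the choice

    def click_avatar(self, name):
        """Player clicked an avatar to address that character instead."""
        if name not in self.conversants:
            raise ValueError(f"{name!r} is not in this conversation")
        self._override = name

    @property
    def addressee(self):
        """Whom the player's next line goes to (drives the large arrowhead)."""
        return self._override or self.last_speaker
```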

The avatars in this interface are NOT static images (except in the D1D, which uses the AutoManga stub). They are parametrically generated avatars which reflect the emotional state (or reaction) of the character. The animation is controlled by ``face sliders''. The animator uses these sliders to create an ``emotional gamut'' (a set of key emotional states defined by the engine), which the engine uses as an interpolation reference for mapping the character's internal emotion registers to an external representation.
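One simple way to use the gamut as an interpolation reference is to treat each key state as an offset from the neutral face and blend those offsets by the strength of each emotion register, much like blend shapes. The slider names and values below are invented for illustration, and linear blending is an assumption, not a settled design.

```python
# Hypothetical emotional gamut: the slider settings an animator chose for
# each key emotional state. Slider values are in [0, 1].
GAMUT = {
    "neutral": {"brow_raise": 0.5, "mouth_curve": 0.5, "eye_open": 0.5},
    "joy": {"brow_raise": 0.6, "mouth_curve": 1.0, "eye_open": 0.4},
    "anger": {"brow_raise": 0.0, "mouth_curve": 0.2, "eye_open": 0.7},
    "surprise": {"brow_raise": 1.0, "mouth_curve": 0.5, "eye_open": 1.0},
}


def face_sliders(registers, gamut=GAMUT):
    """Map emotion registers (each 0..1) to face-slider values.

    Each key state contributes its offset from neutral, scaled by the
    matching register; the result is clamped to the slider range.
    """
    neutral = gamut["neutral"]
    sliders = dict(neutral)
    for emotion, weight in registers.items():
        key = gamut[emotion]
        for name, base in neutral.items():
            sliders[name] += weight * (key[name] - base)
    return {name: min(1.0, max(0.0, value)) for name, value in sliders.items()}
```

Half joy plus half anger, for instance, lowers the brows to 0.3 while leaving a slight smile (`mouth_curve` 0.6).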

Actually, this is probably going to be a bit more complex than that, because a character may not always show you the emotion they are really feeling. Such duplicitous characters represent a challenge at the AI level that we'll have to solve.

Other things on the screen: the conversant's name appears below their avatar (if you know it -- otherwise, some label like ``girl on beach'' may appear instead). Below that is a status line, where extra game messages can be displayed to keep you aware of events outside the conversation, or to give you extra information from the conversant (e.g. ``She removes a key from her pocket and offers it to you.'').

There is also an exit button to return to the ``scene mode'', leaving the conversation.

Naturally, I intend for lines to be spoken aloud as well as displayed on the screen. I'd also like to create timings for each line so that the avatar can speak in lip-synch (as a half-measure, the avatar could simply move its mouth while the line is being spoken and stop when it is not; most anime isn't lip-synched very well anyway, since the anime convention is to record the voices after the animation).
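The half-measure needs nothing more than the start and end times of each audible stretch of a recorded line. A minimal sketch, assuming those timings are available as `(start, end)` pairs in seconds and that the mouth is a single slider where 0.0 is closed and 1.0 is open (both hypothetical):

```python
def mouth_open(t, segments, flaps_per_second=6.0):
    """Mouth-slider value at time t (seconds) for the no-lip-synch fallback.

    segments: (start, end) times when the recorded line is audible.
    Inside a segment the mouth simply flaps open and shut at a fixed
    rate, starting open; outside every segment it stays closed.
    """
    for start, end in segments:
        if start <= t < end:
            phase = (t - start) * flaps_per_second  # flap cycles elapsed
            # Open for the first half of each cycle, closed for the second.
            return 1.0 if int(phase * 2) % 2 == 0 else 0.0
    return 0.0
```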

It is AutoManga that will be responsible for generating the avatar output. To do this, it needs to render individual SVG cels and composite them in real time. The choice of cel is driven by the face sliders. Two incidental animations, ``breeze'' and ``jostle'', will add some sense of activity: these are free-running sliders which the animator can attach to. ``Jostle'' occurs when a very rapid change of emotion is generated; you might expect a character to jump or ``start'' when that happens, and this slider lets the animator add subtle cues, such as clothing shifting, to show it. Similarly, ``breeze'' is driven by ambient conditions and simply gives the character some life by letting their clothes shift as if in a breeze. The breeze variable will probably respond differently indoors and outdoors, and depending on weather conditions (it'll be a Scene object generator attribute). We might also use the breeze variable for the Princess's wild gyrations and disconcerting drift.
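A rough sketch of how the two free-running sliders might be driven, assuming emotion registers arrive as a dict of floats each frame and that the scene supplies a breeze strength. The class, the threshold, and the decay constant are all hypothetical.

```python
import math


class IncidentalSliders:
    """Free-running "jostle" and "breeze" sliders (hypothetical sketch).

    jostle jumps to 1.0 whenever any emotion register changes faster than
    a threshold, then decays exponentially; breeze is a slow sway whose
    amplitude comes from the scene (e.g. indoors vs. a windy hilltop).
    """

    def __init__(self, jostle_threshold=2.0, jostle_decay=5.0):
        self.jostle_threshold = jostle_threshold  # register units per second
        self.jostle_decay = jostle_decay          # decay rate, 1/seconds
        self.jostle = 0.0
        self.time = 0.0
        self._previous = None

    def update(self, registers, dt):
        """Advance by dt seconds (dt > 0) given the current emotion registers."""
        self.time += dt
        self.jostle *= math.exp(-self.jostle_decay * dt)
        if self._previous is not None:
            keys = set(registers) | set(self._previous)
            change = max((abs(registers.get(k, 0.0) - self._previous.get(k, 0.0))
                          for k in keys), default=0.0)
            if change / dt > self.jostle_threshold:
                self.jostle = 1.0  # a sudden emotional jump: the character starts
        self._previous = dict(registers)

    def breeze(self, strength):
        """Breeze slider in [-strength, strength]; strength comes from the scene."""
        # Two sine waves at different frequencies so the sway looks less regular.
        sway = 0.6 * math.sin(1.3 * self.time) + 0.4 * math.sin(2.9 * self.time + 1.0)
        return strength * sway
```

With the defaults, a register jumping by 0.85 within a tenth of a second trips the jostle, which then decays to roughly 60% of its value over each following tenth of a second.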

AutoManga will also generate the player character's expression, giving visual feedback on the expression option the player has chosen.

For the walk mode, we will be using Soya 3D. A very good point of comparison is Balazar (acting as our stand-in below), which uses most of the features we'll need from a 3D engine and will serve as an excellent example for getting the code right. Our interface will differ in how commands are entered, in the inventory (which will probably use PyGame directly), and so on. The characters in The Light Princess will be Cal3D character models, just as in Balazar. To simplify creating lots of similar characters, most characters will be generated from a ``basic human'' template, with dimensions, colors, and clothing parameters changed in a basic XML character description. Our main characters will probably be modeled individually, though. All of this modeling can be done in Blender.
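A basic XML character description could be merged over the template's defaults with the standard library's `xml.etree.ElementTree`. The element and attribute names below are hypothetical placeholders for whatever parameters the Cal3D models end up exposing, and values are kept as strings for simplicity.

```python
import xml.etree.ElementTree as ET

# Hypothetical defaults for the "basic human" template.
BASIC_HUMAN = {
    "dimensions": {"height": "1.75", "build": "1.0"},
    "colors": {"skin": "#d0a080", "hair": "#402010", "eyes": "#604020"},
    "clothing": {"tunic": "plain", "cloak": "none", "hat": "none"},
}


def load_character(xml_text, template=BASIC_HUMAN):
    """Return (name, parameters), with the description overriding the template."""
    root = ET.fromstring(xml_text)
    # Copy each section so the shared template is never modified.
    params = {section: dict(values) for section, values in template.items()}
    for element in root:
        if element.tag not in params:
            raise ValueError(f"unknown section <{element.tag}>")
        params[element.tag].update(element.attrib)
    return root.get("name"), params
```

For example, `<character name="Beggar"><clothing tunic="rags"/></character>` would give a character with the template's body and colors, but in a ragged tunic.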

Very early in the development of The Light Princess project, I envisioned a menu-based ``sentence-builder'' interface for game play. I still like this idea, because:

``give coin to beggar''

Adverbs are optional, but again, they interact with the agent emotion system (there are some inanimate applications as well). So, for example, if you ``give coin nicely to beggar'' you may score more points with the beggar than if you ``give coin casually to beggar''. Likewise, ``hit nail hard with hammer'' may get different results from ``hit nail gently with hammer'' (and it's hard to say which is what you want!).
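The sentence builder maps naturally onto a small record of menu choices. In this sketch the verb list, the preposition each verb takes, and the tone multipliers an agent might apply for each adverb are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical verb menu: each verb and the preposition that introduces
# its second object (None if the verb takes no second object).
VERBS = {"give": "to", "hit": "with", "examine": None, "pick": None}

# Hypothetical multipliers an agent might apply to an action's emotional effect.
ADVERB_TONE = {"nicely": 1.5, "casually": 0.8}


@dataclass
class Command:
    verb: str
    obj: str
    adverb: Optional[str] = None  # optional, as in "give coin nicely to beggar"
    target: Optional[str] = None  # second object, e.g. "beggar" or "hammer"

    def __str__(self):
        words = [self.verb, self.obj]
        if self.adverb:
            words.append(self.adverb)
        if self.target:
            preposition = VERBS[self.verb]
            if preposition is None:
                raise ValueError(f"{self.verb!r} takes no second object")
            words += [preposition, self.target]
        return " ".join(words)

    def tone(self):
        """How strongly an agent weighs the action; 1.0 when no adverb applies."""
        return ADVERB_TONE.get(self.adverb, 1.0)
```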

An interface very similar to this was used in Monkey Island, a LucasArts game built on the SCUMM engine. I've played it, so this is not purely theoretical: I found that game's interface very usable and fun.

Clicking on the ``Inventory'' button will take you to a view of the items you are carrying. This is pretty no-nonsense adventure gaming material, so I'll just show a mock-up screenshot (clip art is by Stephane JOLY/Tatane, Pascual Andre, and Frederic Toussaint):

It's almost trivial, but there is another mode, too. When we ``look'' at or ``examine'' an object up close, we will want to switch from the 3D view to a prepared 2D image of the object. This is similar to talk mode, but the object will pretty much fill the screen. In these views, there may be placeable sprites representing objects which can be picked up or dropped. For example, when we look at the rosebush in the Queen's Garden, we will see several roses which we can pick (of course, you can't put roses back).

This is a straightforward application of basic PyGame tools:
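The pick-up logic for a close-up view can be sketched without any drawing code. In the game, the hotspots would presumably be `pygame.sprite.Sprite` objects in a `pygame.sprite.Group`, with hit-testing done by `pygame.Rect.collidepoint`; here plain dataclasses stand in for them so the logic is easy to follow. The `Hotspot` and `CloseUp` names and the `can_drop_here` flag (for things like roses, which can't be put back) are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Hotspot:
    """A placeable sprite in a close-up view (stand-in for a PyGame sprite)."""
    name: str
    x: int
    y: int
    w: int
    h: int
    can_drop_here: bool = True  # roses would be False: they can't go back

    def contains(self, px, py):
        # Same edge rule as pygame.Rect.collidepoint: right/bottom edges excluded.
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h


@dataclass
class CloseUp:
    background: str
    hotspots: list = field(default_factory=list)

    def click(self, px, py, inventory):
        """Pick up the topmost hotspot under the pointer, if any."""
        for spot in reversed(self.hotspots):  # last drawn is on top
            if spot.contains(px, py):
                self.hotspots.remove(spot)
                inventory.append(spot)
                return spot.name
        return None

    def drop(self, spot, inventory):
        """Put an item back into the view, if this view allows it."""
        if not spot.can_drop_here:
            return False
        inventory.remove(spot)
        self.hotspots.append(spot)
        return True
```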

To keep the style consistent, all of the modes share backgrounds. Here I've represented them with a simple flood fill, but in the game they will be background images taken from the room/scene description. Many rooms may share a single background image (all of the ``civilized woods'' scenes will probably have the same background, for example), but in principle there could be one for every room. The background therefore also reminds the player of where they are in the game.
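Because many rooms share a background, it makes sense to load each image only once and hand the same object to every mode. A minimal sketch using `functools.lru_cache`, assuming a hypothetical room table; the placeholder string stands in for the `pygame.Surface` that `pygame.image.load` would return in the game.

```python
from functools import lru_cache

# Hypothetical room descriptions: several rooms name the same background file.
ROOMS = {
    "woods_path": {"background": "civilized_woods.png"},
    "woods_clearing": {"background": "civilized_woods.png"},
    "queens_garden": {"background": "queens_garden.png"},
}

LOADS = []  # records each real load, to show the cache at work


@lru_cache(maxsize=None)
def load_background(filename):
    """Load a background once; the game would call pygame.image.load here."""
    LOADS.append(filename)
    return f"<image {filename}>"  # placeholder for a pygame.Surface


def background_for(room):
    """Background used by every mode (walk, talk, inventory, close-up) in a room."""
    return load_background(ROOMS[room]["background"])
```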

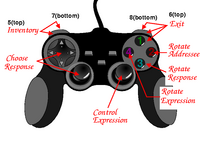

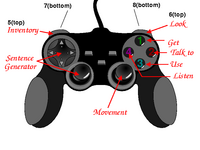

The game should be controllable with either the keyboard (the mouse gives finer control but is not strictly necessary) or a standard PlayStation-style controller of the kind readily available as a USB peripheral for PCs nowadays (I got one for $15 recently). I've given one possible set of button bindings for each mode above, but game testing will probably show whether these are the best. I think good controller support will improve players' impression of the game: existing Linux games that I've tried (e.g. Tux Racer, Balazar, Slune) don't seem to take full advantage of these devices, even though they technically support them. Programming joystick controls in PyGame is quite easy, so this should present no problems.
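Keeping the bindings in a per-mode table makes them easy to revise after game testing. The actions, key names, and button numbers below are placeholders, not the bindings proposed in the mock-ups. With PyGame, the key names would become `pygame.K_*` constants and the button numbers would come from the `button` attribute of `JOYBUTTONDOWN` events.

```python
# Hypothetical per-mode bindings for keyboard keys and controller buttons.
BINDINGS = {
    "talk": {
        "keys": {"up": "prev_line", "down": "next_line",
                 "return": "say", "escape": "exit"},
        "buttons": {0: "say", 1: "say_nothing", 3: "cycle_expression", 9: "exit"},
    },
    "walk": {
        "keys": {"i": "inventory", "space": "use"},
        "buttons": {0: "use", 2: "inventory"},
    },
}


def action_for(mode, *, key=None, button=None):
    """Translate a key name or controller button index into a game action.

    Returns None for unbound input, so the caller can simply ignore it.
    """
    table = BINDINGS[mode]
    if key is not None:
        return table["keys"].get(key)
    if button is not None:
        return table["buttons"].get(button)
    return None
```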

See the wiki for planning and implementation issues.